
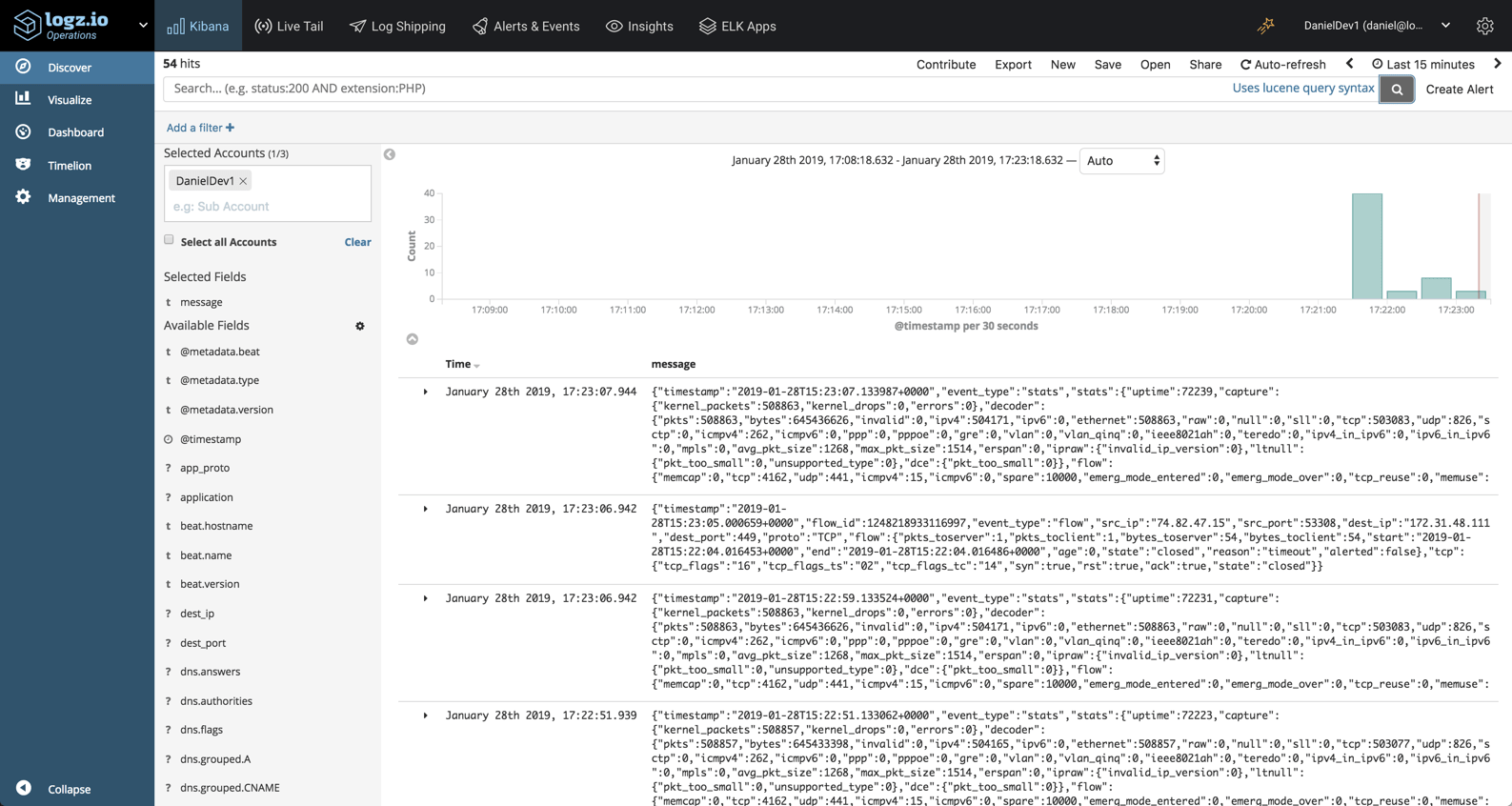

message: adding template for index patterns.

Filebeat has properties that make it a great tool for sending file data to Humio. Since 7.0, JSON log files are the new default for Elasticsearch; sample log lines can be found in elasticsearch/logs/elasticsearchserver.json. To ship them, enable the Elasticsearch module and change it to the custom paths of the log files.

The ELK Stack, which traditionally consisted of three main components (Elasticsearch, Logstash, and Kibana), is now also used together with what is called Beats. The data collected by the different Beats varies: log files in the case of Filebeat, network data in the case of Packetbeat. A typical Filebeat setup uses a JSON directive for parsing and a local Elasticsearch instance as the output destination.

The most annoying part to me is the events: I'd like each object to be an event and each value to be a field, with the fields Time, Action, Data, IPAddress, and Username.

Although it is possible to use the following to suppress node values when generating JSON, these options do not remove the root nodes completely: SERIALIZE-HIDDEN on the ProDataSet definition, SERIALIZE-NAME '' on the temp-table definition, and the 'omit-outer-object' option with the WRITE-JSON method.

Hi all, I'm trying to parse a big JSON line into Logstash. The JSON is an array of multiple objects.

The Beats are lightweight data shippers, written in Go, that you install on your servers to capture all sorts of operational data. Filebeat tails and ships log files. If you need help or hit an issue, please start by opening a topic on our discuss forums. Tropicalfish: Beats, lightweight shippers for Elasticsearch & Logstash (elastic/beats).
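Since the goal is one event per object, one possible workaround is to rewrite the array as newline-delimited JSON before shipping it. The following is a minimal sketch, not part of Filebeat or Logstash; the function name `array_to_ndjson` and the sample values are hypothetical, and only the field names come from the question above.

```python
import json


def array_to_ndjson(array_text: str) -> str:
    """Turn a JSON array of objects into newline-delimited JSON.

    Hypothetical helper for illustration: each array element ends up
    on its own line so a line-oriented shipper can treat it as one event.
    """
    records = json.loads(array_text)
    if not isinstance(records, list):
        raise ValueError("expected a JSON array at the top level")
    # Compact separators keep every object on a single line.
    return "\n".join(json.dumps(r, separators=(",", ":")) for r in records)


# Sample values are made up; the field names follow the question.
sample = (
    '[{"Time": "10:00", "Action": "login", "Username": "alice"},'
    ' {"Time": "10:05", "Action": "logout", "Username": "alice"}]'
)
ndjson = array_to_ndjson(sample)
print(ndjson)
```

Each printed line is a complete JSON object, which is the one-object-per-line shape that line-based JSON decoding expects.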

To merge the decoded JSON fields into the root of the event, specify target with an empty string. By default, the decoded JSON object replaces the string field from which it was read. For the maximum parsing depth (max_depth), a value of 1 will decode the JSON objects in the fields indicated in fields; a value of 2 will also decode the objects embedded in the fields of these parsed documents.

fields_under_root: If set to true, custom fields are stored at the top level of the output document.

If you're looking to actually export logs from Elasticsearch, you probably want to save them to a file, effectively creating a dump.json file that contains all the documents. You could click Request and use that as a query to ES with curl or something similar to query ES for the logs you want.

One of the coolest new features in Elasticsearch 5 is the ingest node, which adds some Logstash-style processing to the Elasticsearch cluster, so data can be transformed before being indexed without needing another service or extra infrastructure to do it.

The filebeat module installs and configures the filebeat log shipper. Filebeat inputs (versions > 5.0) can natively decode JSON objects if they are stored one per line.
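To make the difference between replacing the string field and merging into the root concrete, here is a small Python model of the two outcomes. It is only a sketch under stated assumptions and is not Filebeat code: the function `decode_json_field` is hypothetical, the original string field is left in place when merging, and existing keys are never overwritten, which may differ from how Filebeat resolves conflicts.

```python
import json


def decode_json_field(event: dict, field: str, target=None) -> dict:
    """Illustrative model of decoding a JSON string held in one event field.

    target=None : the decoded object replaces the string field.
    target=""   : the decoded keys are merged into the root of the event.
    other value : the decoded object is stored under that key.
    """
    decoded = json.loads(event[field])
    out = dict(event)  # work on a copy so the input event stays unchanged
    if target is None:
        out[field] = decoded
    elif target == "":
        for key, value in decoded.items():
            # Assumption: keys already present in the event win.
            out.setdefault(key, value)
    else:
        out[target] = decoded
    return out


# Hypothetical event; "host" stands in for any pre-existing field.
event = {"message": '{"Action": "login", "Username": "alice"}', "host": "web-1"}
replaced = decode_json_field(event, "message")
merged = decode_json_field(event, "message", target="")
print(replaced)
print(merged)
```

In the first result the `message` field holds a nested object; in the second, `Action` and `Username` sit next to `host` at the top level of the event.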
